One Year Later, We’re Still Figuring Out What To Do With ChatGPT

Is OpenAI’s ChatGPT a search engine replacement, second brain, or speculative asset?

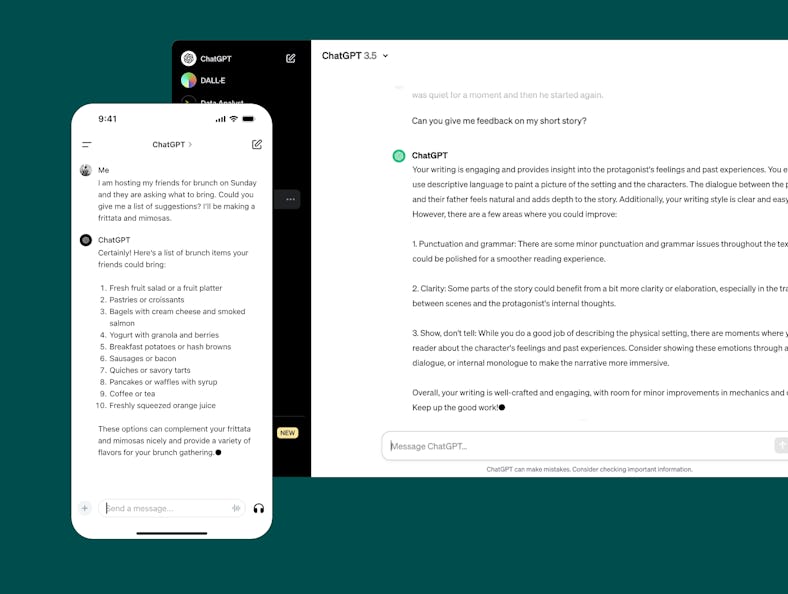

It’s no exaggeration to say that it has been an absolutely wild year since OpenAI released ChatGPT, its all-purpose chatbot, to the world on November 30, 2022.

Running initially on a modified version of the company’s GPT-3.5 large language model (LLM), ChatGPT could produce surprisingly fluid responses to prompts, but it regularly offered up false information and was limited in part by a dataset that ended in 2021.

Flash forward a year and the chatbot is now used by 100 million people a week. OpenAI has released multiple updates to support web browsing, document parsing, and image generation, along with an entirely new model called GPT-4 that has noticeably improved ChatGPT’s performance.

None of that happened in a vacuum, of course. AI features have proliferated all over consumer tech products since ChatGPT’s launch, whether they’re made by Google, Microsoft, or the dozens of startups looking for a piece of the pie. Many of them also happen to use OpenAI’s models, including the ones that power ChatGPT. But looking past all of that “progress,” and the several new zeros behind OpenAI’s valuation, the ultimate purpose of ChatGPT is no clearer than the question of why AI matters at all. A lot of that has to do with the overwhelming and unrealistic expectations generative AI is burdened with to inflate its value.

A New Search Engine

You can now chat with Microsoft Copilot or Bing, just as easily as you used to search.

First, though, it’s worth considering the initial consumer-facing idea generative AI was saddled with: reinventing search. Microsoft is on the hook for billions as part of its multi-year partnership with OpenAI, largely, it now seems, because GPT-4 is at the core of the “new Bing” and now Copilot for Windows 11. Integrating a chatbot into Bing didn’t change the quality of its search results, but it did vastly improve the experience of receiving them, offering a natural language way to get information, and eventually complete all sorts of other smaller tasks like making reservations. It wasn’t a complete success — Bing reportedly only controlled 6.47 percent of U.S. market share in July 2023 in comparison to Google’s hold over more than half of the rest — but it signaled a larger push at Microsoft to make AI a core feature of all of its products going forward.

Bing wasn’t the only new search experience, of course. Google’s Bard, while at a separate URL from vanilla search, similarly tried to capitalize on the benefits of chatting about information rather than combing through search results. Google didn’t stop with Bard, either. It also introduced the Search Generative Experience (SGE), which added Bard-esque summaries directly above results, and Duet AI, a chatbot that lives inside Google Workspace apps and is capable of the same kinds of text and image generation as ChatGPT and DALL-E.

All of these moves at rethinking search — including The Browser Company’s recent plan to direct some search queries in its Arc browser to ChatGPT rather than Google — are an attempt to correct the reality that Google just doesn’t produce useful results in the way it used to. There are multiple related reasons for this, including Google’s own ad business, but it’s hard to say chatbots are an ideal alternative. They’re better at producing specific answers, but they also pose an existential threat to the people who currently produce the information they rely on, like writers, researchers, and journalists who also need the web traffic chatbots could steal.

A Second Brain

Notion’s AI can now answer questions about just about anything you have stored in your Notion workspace.

From the beginning, a key advantage of these chatbots has been their ability to understand context. They could remember things you told them at the beginning of a conversation and incorporate them into later responses. Using large language models to digest and parse large amounts of data has quickly become one of their more promising uses.

Tools like NotebookLM, Fabric, and Dropbox let you ask questions about the documents and files you upload to them, helping you understand things you might not have read or remembered. Notion’s AI feature does the same, and bundles in creative tools for generating text on the fly. All of these, save for Google’s highly experimental NotebookLM, require additional subscriptions, but their ability to simplify the job of managing a lot of information makes it easy to understand their appeal.

Even wilder AI ideas are being explored with wearables. Devices like the Tab or the Rewind Pendant take the idea of ingesting as much information as possible to the extreme by recording the world around you almost constantly, and then letting you talk with a chatbot about what you heard or said. As you might guess, the open question with these devices and all of the apps I listed above is whether they’re truly private and secure. What information is safe and what information is shared? Should we really be recording ourselves and others all of the time just for the magic trick of not forgetting something?

For a concerning recent example, look no further than ChatGPT, which, according to 404 Media, could be tricked with the right prompt into revealing gigabytes of training data, including private information scraped from the internet. Considering LLMs are trained on giant datasets from all over the web, that information could very well be out of date, but it is surprising how easily the chatbot spits it out.

A Creative Engine

DALL-E 3 can produce highly detailed images.

A final possible future for generative AI is as a creative companion or replacement. OpenAI’s DALL-E 3 is well known for being able to create highly detailed images in a variety of styles on the fly. The images can’t consistently shake the fact that they look artificial in one way or another, but they’re dramatically better than they were even a year ago. Services like Pika promise to do something similar with video, offering seemingly endless amounts of customization in terms of style and content.

ChatGPT now has DALL-E 3 built in, but the chatbot caused a stir in the world of academia even without image-generating abilities. Its popularity and accessibility set off a mini-crisis in schools across the U.S. The crisis was overblown, because even if AI-written essays looked and sounded good, they made no argumentative sense, but it still revealed a real uneasiness around the idea of passing off text from a generative AI as human.

Hype Smokescreen

All of these use cases are valid, and the average AI evangelist would likely say that the flexibility is the point, but is ChatGPT, or the models that power it like GPT-4 or DALL-E 3, actually good at any of these skills? Like, good enough to start completely rethinking existing products in the way Google and Microsoft have? My answer is no, but that’s far from the consensus.

Sam Altman’s removal and reinstatement as CEO of OpenAI was breathlessly covered two weeks ago for a variety of reasons: it seemed random, it looked bad for everyone involved (especially Microsoft), and it may have been because of some kind of AI safety concerns. That last point stands out because, to me, it seems like the root of all of this. OpenAI has, in ways big and small, suggested it can create an artificial general intelligence (AGI), a true AI that learns and thinks as a human does, and that claim has been uncritically accepted by most everyone. Not only is it unclear if that’s even possible or if it’s something we’d want, but there isn’t consensus on what that would actually look like. Even OpenAI’s definition of AGI as “autonomous systems that surpass humans in most economically valuable tasks” leaves plenty of wiggle room.

Combine that with the constant doom and gloom that Altman and OpenAI spread about how dangerous AI is (which makes it all the more important that they be given as much leeway and funding as possible to do it safely), and you get a perfect mix to pin unrealistic hopes and investments on. That’s an even better black box than anything crypto or the metaverse could offer, because so far it’s actually produced semi-useful experiences people like. All of OpenAI’s products are viewed through the lens that if they’re not changing history, they will soon. This is a company that just announced an app store for custom GPTs. Why aren’t we viewing their products as products? ChatGPT is an exciting, but still clearly fallible tool, not a glimpse of some perfect AI future. A year since its launch, it’s a shame we haven’t learned that.