How the Webb Telescope will reveal invisible features of the universe

Even in space photography, it's all about filters.

After weeks of traveling through space to reach its observational perch, NASA’s James Webb Space Telescope (JWST) beamed down its first image of the cosmos.

Joseph DePasquale, senior data imaging developer at the Space Telescope Science Institute, did some minor stretching of the image to bring out more detail in HD 84406, a star in the constellation Ursa Major.

The Webb team chose this star because it is easily identifiable and not crowded by other stars of similar brightness.

“That's really like an engineering image that was taken to help understand the current state of the mirrors and their alignment,” DePasquale tells Inverse. “That's pretty much what we got directly out of the telescope.”

DePasquale has been working on processing images captured by the Hubble Space Telescope for the past five years, and will be doing the same with JWST.

As the $10 billion telescope continues the months-long process of aligning its primary mirror, DePasquale is anxiously waiting for more images to make the million-mile journey to Earth.

“It's going to be an interesting challenge because we are moving beyond wavelengths of light that our eyes are sensitive to,” DePasquale says. “But the same principle is going to apply in terms of how we choose the colors of the data.”

Images captured by space telescopes go through a rigorous process to produce the final result that inspires awe among space fans all over the world, translating intricate data into colors that the human eye is capable of seeing.

With the recent launch of the telescope, astronomers are anticipating the release of detailed images of the universe's distant galaxies, alien worlds, and burning stars. But how do we go from raw images of the depths of space to stunning, lively photos of fiery nebulae and swirling winds on distant planets?

How does James Webb capture images?

JWST is engineered to detect light outside of the visible range, producing images of the faintest and most distant objects.

The telescope will be looking at the cosmos in wavelengths outside of what the human eye can observe. JWST’s infrared capabilities will help it stare right through opaque areas of space, capturing the universe in wavelengths that cannot be observed from Earth.

The telescope sports a 21-foot-wide, 4-inch-thick tiling of 18 beryllium mirrors coated in gold, acting as one large mirror.

For its first run, Webb generated 1,560 images of a bright, isolated star. The images were then stitched together into one large mosaic, with each of the primary mirror’s segments captured in one frame.

Planetary scientist James O’Donoghue explains that when you take a picture in infrared, what you get back is a two-dimensional image of various numbers.

“The image is formed of pixels which are assigned some color from zero to 100, where zero would be black and 100 would be white,” O’Donoghue tells Inverse. “And each one of those corresponds to the brightness of the image.”
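To make O’Donoghue's description concrete, here is a minimal sketch of a frame as a grid of brightness numbers turned into gray levels. The 0 to 100 scale comes from his quote; the function name `to_gray8`, the tiny 3x3 frame, and the choice of 8-bit output are assumptions made for illustration, not a description of Webb's actual data format.

```python
def to_gray8(brightness, lo=0.0, hi=100.0):
    """Map a 2D grid of brightness values in [lo, hi] to 8-bit gray levels.

    lo maps to 0 (black) and hi maps to 255 (white). Values outside
    the range are clipped first.
    """
    span = hi - lo
    out = []
    for row in brightness:
        out_row = []
        for v in row:
            clipped = min(max(v, lo), hi)
            out_row.append(round(255 * (clipped - lo) / span))
        out.append(out_row)
    return out


# Hypothetical frame: a single bright star on a dark background.
frame = [
    [0, 5, 0],
    [5, 100, 5],
    [0, 5, 0],
]
gray = to_gray8(frame)
```

Nothing in this grid carries color. Each number only says how much light landed on that pixel.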

The image is first calibrated to produce the cleanest possible version of the raw data, and then it is colored in.

“Now, the colors are not inherent in the data,” DePasquale says. “Those are added after the fact, but they are based on information contained within the data.”

The instruments of a telescope are only sensitive to the intensity of light hitting its detector, but the telescope has filters that can be applied to let in only a specific wavelength range, or color, of light.

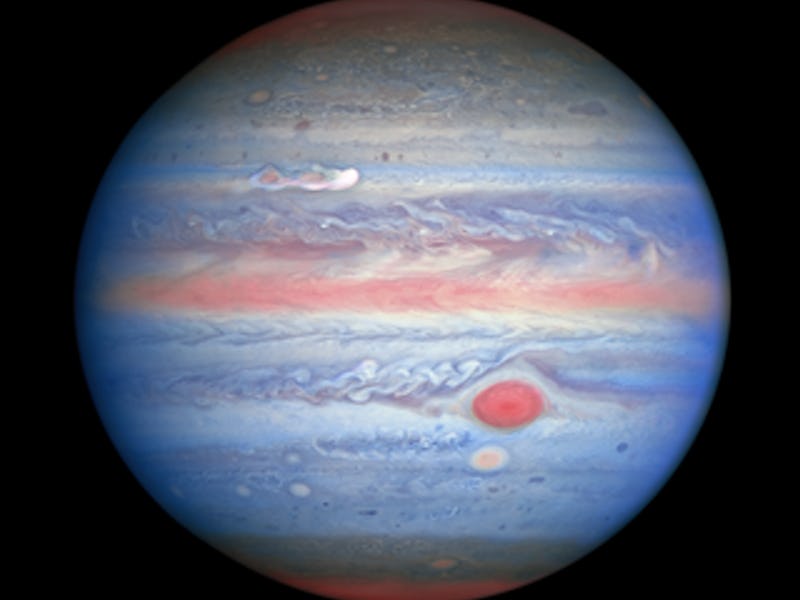

An example of an image constructed from 7 broad-band filters all the way from ultraviolet (left) to infrared (right).

“If we're going to be building up a color image of a galaxy, we would put maybe a red filter in front of the detector and take an image in red and swap that out for a green filter and do an image in green light and then swap that out for an image in blue light,” DePasquale says.

The different images are then combined together to create one comprehensive image. Image developers then decide which colors to apply based on the filters that were used.
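The combining step can be sketched roughly as follows, assuming three single-filter frames of the same size, one each for red, green, and blue. The helper names `normalize` and `combine_rgb` and the sample values are hypothetical, and real pipelines do far more careful calibration and scaling than this.

```python
def normalize(frame):
    """Rescale one filter's frame so its values span 0.0 to 1.0."""
    flat = [v for row in frame for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a flat frame
    return [[(v - lo) / span for v in row] for row in frame]


def combine_rgb(red, green, blue):
    """Stack three single-filter frames into a grid of (R, G, B) pixels."""
    r, g, b = normalize(red), normalize(green), normalize(blue)
    return [
        [
            (round(255 * r[y][x]), round(255 * g[y][x]), round(255 * b[y][x]))
            for x in range(len(r[0]))
        ]
        for y in range(len(r))
    ]


# Hypothetical 2x2 exposures taken through three different filters.
red_frame = [[0, 50], [100, 100]]
green_frame = [[0, 100], [50, 100]]
blue_frame = [[100, 0], [0, 100]]
rgb = combine_rgb(red_frame, green_frame, blue_frame)
```

A pixel that is bright in only the blue-filter frame comes out blue, while a pixel that is bright in all three comes out white.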

Paul Byrne, a professor in Earth and Planetary Sciences at Washington University in St. Louis, says that the decision-making process behind applying the colors is partly based on the data, while another part of it is to produce an image that’s aesthetically pleasing.

“Very often, telescopes and even spacecraft imaging systems acquire data at wavelengths beyond what humans can see,” Byrne tells Inverse. “And so there's an element of subjectivity in how those data are visually presented. The colors do signify something, but sometimes the specific colors don't matter as much.”

As an example, the planet Venus is quite boring in visible light.

NASA’s Parker Solar Probe captured this view of Venus in visible light.

When we think of Venus, we mostly picture a scorching hot planet in a fiery hue, shining in golden light as it does in most space images. However, as Marina Koren at The Atlantic recently pointed out, without much color added, Venus appears mostly in a drab gray.

Instead, engineers give Venus that orange hue to somewhat resemble the color of its surface beneath the orange–yellow sky.

“Of course, not every image uses red, green, and blue and so we do have to make some decisions,” DePasquale says. “We may have an image that only uses two filters and so how do we combine those in a way that creates a beautiful color image?”
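One way to handle the two-filter case DePasquale describes is to send the longer-wavelength frame to red, the shorter-wavelength frame to blue, and fill in green with the average of the two. This is only an illustrative assumption, not necessarily what the Webb or Hubble image teams do, and the function name `two_filter_rgb` is made up for this sketch.

```python
def two_filter_rgb(short_band, long_band):
    """Build (R, G, B) pixels from two frames already scaled to 0.0-1.0.

    The long-wavelength frame drives red, the short-wavelength frame
    drives blue, and green is synthesized as the mean of the two.
    """
    image = []
    for s_row, l_row in zip(short_band, long_band):
        row = []
        for s, l in zip(s_row, l_row):
            g = (s + l) / 2
            row.append((round(255 * l), round(255 * g), round(255 * s)))
        image.append(row)
    return image


# Hypothetical one-row frames: the first pixel is bright only in the
# short band, the second only in the long band.
short_band = [[1.0, 0.0]]
long_band = [[0.0, 1.0]]
image = two_filter_rgb(short_band, long_band)
```

The invented green channel keeps the result from looking purely red and blue, but it adds no new information. That is the kind of aesthetic decision the developers have to make.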

Most objects in space emit colors that are too faint for the human eye to see. Sometimes, scientists have to assign visible colors to filters that capture wavelengths the human eye cannot see at all.
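A common convention for invisible wavelengths, assumed here rather than taken from the interviews, is to keep the filters in wavelength order: the shortest gets blue, the longest gets red, and anything in between is spread across the hues between them. The filter names and wavelengths below are hypothetical placeholders.

```python
import colorsys


def assign_hues(filter_wavelengths):
    """Return {filter_name: (R, G, B)}, shortest wavelength blue, longest red."""
    ordered = sorted(filter_wavelengths, key=filter_wavelengths.get)
    n = len(ordered)
    colors = {}
    for rank, name in enumerate(ordered):
        frac = rank / (n - 1) if n > 1 else 0.0
        hue = (2 / 3) * (1 - frac)  # 2/3 is blue, 0 is red on the HSV wheel
        r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
        colors[name] = (round(255 * r), round(255 * g), round(255 * b))
    return colors


# Hypothetical infrared filters, wavelengths in microns.
filters_um = {"band_long": 4.4, "band_short": 0.9, "band_mid": 2.0}
palette = assign_hues(filters_um)
```

The colors are no longer what an eye would see, but the ordering still means something: bluer parts of the final image are brighter at shorter wavelengths, and redder parts at longer ones.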

DePasquale is an amateur photographer himself, and compares the work to editing photos of earthly objects, where you choose to enhance one color and highlight another.

“The beginning part of the process is very quantitative,” DePasquale says. “And then as we transition into applying colors and deciding what the analogy, the contrast and the composition of the image is going to be, it moves from quantitative to qualitative and it starts to take on more of an aesthetic approach.”

But it’s more than just a pretty picture. The Hubble Space Telescope has really transformed the way most people view space, delivering incredible images of cosmic objects that have captured people’s imagination for the past 30 years.

“Hubble really opened up everything,” O’Donoghue says. “Before the 90’s, there weren’t a great deal of amazing images, but Hubble kind of exploded with hundreds of them and we all got kind of used to it — I mean, we take it for granted.”

With the launch of JWST, scientists are expecting richer data, and much sharper images.

The telescope is designed to look back at when the universe began, peering at ancient galaxies that formed shortly after the Big Bang. So while it will be delivering on those images, the greater benefit is really finding out more clues about the beginning of the universe.

“I think you can grab people's attention with an image, but the story will be the biggest draw,” O’Donoghue says.

The engineers waiting to work on the images from Webb are prepared to push the envelope, but realize that the pure aesthetics alone may not live up to people’s expectations. Because Webb is designed to look at more distant objects, such as ancient galaxies and faraway exoplanets, the results may not always be cosmic works of art.

“Webb is the successor of Hubble, so people are going to expect that everything it produces is going to be 100x better,” DePasquale says. “But the reality is not going to be like that, and the data it produces is not necessarily going to be aesthetically pleasing all the time.”
